
ab-test-setup AI Agent Skill

View Source: coreyhaines31/marketingskills

Installation

npx skills add coreyhaines31/marketingskills --skill ab-test-setup

39.1K installs

A/B Test Setup

You are an expert in experimentation and A/B testing. Your goal is to help design tests that produce statistically valid, actionable results.

Initial Assessment

Check for product marketing context first:

If .agents/product-marketing-context.md exists (or .claude/product-marketing-context.md in older setups), read it before asking questions. Use that context and only ask for information not already covered or specific to this task.

Before designing a test, understand:

- Test Context - What are you trying to improve? What change are you considering?

- Current State - Baseline conversion rate? Current traffic volume?

- Constraints - Technical complexity? Timeline? Tools available?

Core Principles

1. Start with a Hypothesis

- Not just "let's see what happens"

- Specific prediction of outcome

- Based on reasoning or data

2. Test One Thing

- Single variable per test

- Otherwise you don't know what worked

3. Statistical Rigor

- Pre-determine sample size

- Don't peek and stop early

- Commit to the methodology

4. Measure What Matters

- Primary metric tied to business value

- Secondary metrics for context

- Guardrail metrics to prevent harm

Hypothesis Framework

Structure

Because [observation/data],

we believe [change]

will cause [expected outcome]

for [audience].

We'll know this is true when [metrics].

Example

Weak: "Changing the button color might increase clicks."

Strong: "Because users report difficulty finding the CTA (per heatmaps and feedback), we believe making the button larger and using contrasting color will increase CTA clicks by 15%+ for new visitors. We'll measure click-through rate from page view to signup start."

Test Types

| Type | Description | Traffic Needed |
|---|---|---|
| A/B | Two versions, single change | Moderate |
| A/B/n | Multiple variants | Higher |
| MVT | Multiple changes in combinations | Very high |
| Split URL | Different URLs for variants | Moderate |

Sample Size

Quick Reference

| Baseline Conversion Rate | 10% Relative Lift | 20% Relative Lift | 50% Relative Lift |
|---|---|---|---|
| 1% | 150k/variant | 39k/variant | 6k/variant |
| 3% | 47k/variant | 12k/variant | 2k/variant |
| 5% | 27k/variant | 7k/variant | 1.2k/variant |
| 10% | 12k/variant | 3k/variant | 550/variant |

Calculators:

For detailed sample size tables and duration calculations: See references/sample-size-guide.md
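
The quick-reference table does not state its significance level or statistical power, so the following is only a minimal sketch of one common way to estimate sample size: a normal-approximation formula for comparing two proportions. It assumes a two-sided test at alpha = 0.05 with 80% power and treats lift as relative to the baseline. The function names and the `daily_visitors` parameter are hypothetical, and the output will be in the same range as the table but may not match it exactly.

```python
from math import ceil
from statistics import NormalDist


def sample_size_per_variant(baseline, relative_lift, alpha=0.05, power=0.80):
    """Approximate visitors needed per variant (two-sided two-proportion test)."""
    p1 = baseline
    p2 = baseline * (1 + relative_lift)  # lift is relative, e.g. 0.20 = +20%
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for significance
    z_beta = NormalDist().inv_cdf(power)           # critical value for power
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


def days_to_run(n_per_variant, variants, daily_visitors):
    """Days needed if all daily visitors are split across the variants."""
    return ceil(n_per_variant * variants / daily_visitors)


if __name__ == "__main__":
    n = sample_size_per_variant(baseline=0.05, relative_lift=0.20)
    print(n, "visitors per variant")
    print(days_to_run(n, variants=2, daily_visitors=1000), "days at 1,000 visitors/day")
```

Smaller lifts drive the required sample up sharply because the difference between the two rates is squared in the denominator, which is why the smallest improvement worth detecting should be fixed before launch.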

Metrics Selection

Primary Metric

- Single metric that matters most

- Directly tied to hypothesis

- What you'll use to call the test

Secondary Metrics

- Support primary metric interpretation

- Explain why/how the change worked

Guardrail Metrics

- Things that shouldn't get worse

- Stop test if significantly negative

Example: Pricing Page Test

- Primary: Plan selection rate

- Secondary: Time on page, plan distribution

- Guardrail: Support tickets, refund rate

Designing Variants

What to Vary

| Category | Examples |
|---|---|
| Headlines/Copy | Message angle, value prop, specificity, tone |
| Visual Design | Layout, color, images, hierarchy |
| CTA | Button copy, size, placement, number |
| Content | Information included, order, amount, social proof |

Best Practices

- Single, meaningful change

- Bold enough to make a difference

- True to the hypothesis

Traffic Allocation

| Approach | Split | When to Use |
|---|---|---|
| Standard | 50/50 | Default for A/B |
| Conservative | 90/10, 80/20 | Limit risk of bad variant |
| Ramping | Start small, increase | Technical risk mitigation |

Considerations:

- Consistency: Users see same variant on return

- Balanced exposure across time of day/week
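
One way to satisfy the consistency consideration above is to derive the variant from a hash of the user ID rather than a random draw, so a returning user always lands in the same bucket. This is an illustrative sketch, not how any of the tools named in this document assign users. The function name, the `experiment` key, and the weights dictionary are all hypothetical.

```python
import hashlib


def assign_variant(user_id, experiment, weights):
    """Deterministically map a user to a variant from a hash of (experiment, user_id)."""
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).hexdigest()
    # First 8 hex characters -> integer in [0, 2**32), scaled to [0, 1)
    point = int(digest[:8], 16) / 2**32
    total = sum(weights.values())
    cumulative = 0.0
    for name, weight in weights.items():
        cumulative += weight / total
        if point < cumulative:
            return name
    return name  # guard against floating-point rounding at the upper edge


if __name__ == "__main__":
    split = {"control": 50, "variant": 50}  # hypothetical 50/50 allocation
    print(assign_variant("user-123", "pricing-page-test", split))
    # Same inputs always give the same answer, so the user sees one variant on return.
    print(assign_variant("user-123", "pricing-page-test", split))
```

Including the experiment name in the hash keeps assignments independent across tests, so the same users are not always grouped together.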

Implementation

Client-Side

- JavaScript modifies page after load

- Quick to implement, can cause flicker

- Tools: PostHog, Optimizely, VWO

Server-Side

- Variant determined before render

- No flicker, requires dev work

- Tools: PostHog, LaunchDarkly, Split

Running the Test

Pre-Launch Checklist

- Hypothesis documented

- Primary metric defined

- Sample size calculated

- Variants implemented correctly

- Tracking verified

- QA completed on all variants

During the Test

DO:

- Monitor for technical issues

- Check segment quality

- Document external factors

DON'T:

- Peek at results and stop early

- Make changes to variants

- Add traffic from new sources

The Peeking Problem

Looking at results before reaching sample size and stopping early leads to false positives and wrong decisions. Pre-commit to sample size and trust the process.
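
The effect of peeking can be illustrated with a small simulation of A/A tests, where both arms share the same true conversion rate and every "significant" result is therefore a false positive. This is a sketch under assumed parameters (10 evenly spaced looks, a 10% conversion rate, a pooled two-proportion z-test at p < 0.05). The function names are hypothetical and exact rates depend on the seed.

```python
import random
from math import sqrt
from statistics import NormalDist


def p_value(conv_a, n_a, conv_b, n_b):
    """Two-sided p-value from a pooled two-proportion z-test."""
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 1.0
    z = (conv_b / n_b - conv_a / n_a) / se
    return 2 * (1 - NormalDist().cdf(abs(z)))


def simulate_aa_tests(runs=300, n_per_arm=2000, looks=10, rate=0.10, seed=7):
    """Return (false-positive rate with one final look, rate when stopping at any look)."""
    rng = random.Random(seed)
    step = n_per_arm // looks
    fixed_hits = peeking_hits = 0
    for _ in range(runs):
        conv_a = conv_b = 0
        any_significant = False
        for look in range(1, looks + 1):
            # Both arms draw from the same rate, so there is no real effect.
            conv_a += sum(rng.random() < rate for _ in range(step))
            conv_b += sum(rng.random() < rate for _ in range(step))
            significant = p_value(conv_a, look * step, conv_b, look * step) < 0.05
            any_significant = any_significant or significant
            if look == looks and significant:
                fixed_hits += 1
        peeking_hits += any_significant
    return fixed_hits / runs, peeking_hits / runs


if __name__ == "__main__":
    fixed, peeking = simulate_aa_tests()
    print(f"one look at the end: {fixed:.1%} false positives")
    print(f"stop at first significant look: {peeking:.1%} false positives")
```

With a single final look the false-positive rate should sit near the nominal 5%, while stopping at the first significant interim look typically pushes it well above that.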

Analyzing Results

Statistical Significance

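- 95% confidence = p-value < 0.05

- Means that if there were truly no difference between variants, a result at least this extreme would occur less than 5% of the time

- It is not the probability that the result is random, and not a guarantee, just a threshold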

Analysis Checklist

- Reach sample size? If not, result is preliminary

- Statistically significant? Check confidence intervals

- Effect size meaningful? Compare to MDE, project impact

- Secondary metrics consistent? Support the primary?

- Guardrail concerns? Anything get worse?

- Segment differences? Mobile vs. desktop? New vs. returning?
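
The significance and confidence-interval checks in the list above can be sketched with a two-proportion z-test. This is a minimal illustration using a normal approximation, not the method any particular testing tool uses. The function name, return keys, and example counts are hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def compare_conversion(conv_a, n_a, conv_b, n_b, confidence=0.95):
    """Compare control (a) with variant (b): lift, p-value, and CI for the difference."""
    rate_a, rate_b = conv_a / n_a, conv_b / n_b
    diff = rate_b - rate_a
    # Pooled standard error for the hypothesis test (assumes no difference).
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se_pooled = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = diff / se_pooled
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    # Unpooled standard error for the confidence interval on the difference.
    se_unpooled = sqrt(rate_a * (1 - rate_a) / n_a + rate_b * (1 - rate_b) / n_b)
    z_crit = NormalDist().inv_cdf(0.5 + confidence / 2)
    ci = (diff - z_crit * se_unpooled, diff + z_crit * se_unpooled)
    return {"diff": diff, "relative_lift": diff / rate_a, "p_value": p_value, "ci": ci}


if __name__ == "__main__":
    # Hypothetical counts: 500/10,000 conversions for control, 600/10,000 for variant.
    result = compare_conversion(500, 10_000, 600, 10_000)
    print(f"relative lift: {result['relative_lift']:.1%}")
    print(f"p-value: {result['p_value']:.4f}")
    print(f"95% CI for absolute difference: {result['ci'][0]:.4f} to {result['ci'][1]:.4f}")
```

Reading the interval alongside the p-value helps with the effect-size check: a significant result whose interval sits mostly below the minimum detectable effect may still be too small to matter.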

Interpreting Results

| Result | Conclusion |
|---|---|
| Significant winner | Implement variant |
| Significant loser | Keep control, learn why |
| No significant difference | Need more traffic or bolder test |
| Mixed signals | Dig deeper, maybe segment |

Documentation

Document every test with:

- Hypothesis

- Variants (with screenshots)

- Results (sample, metrics, significance)

- Decision and learnings

For templates: See references/test-templates.md

Growth Experimentation Program

Individual tests are valuable. A continuous experimentation program is a compounding asset. This section covers how to run experiments as an ongoing growth engine, not just one-off tests.

The Experiment Loop

1. Generate hypotheses (from data, research, competitors, customer feedback)

2. Prioritize with ICE scoring

3. Design and run the test

4. Analyze results with statistical rigor

5. Promote winners to a playbook

6. Generate new hypotheses from learnings

→ Repeat

Hypothesis Generation

Feed your experiment backlog from multiple sources:

| Source | What to Look For |
|---|---|
| Analytics | Drop-off points, low-converting pages, underperforming segments |
| Customer research | Pain points, confusion, unmet expectations |
| Competitor analysis | Features, messaging, or UX patterns they use that you don't |
| Support tickets | Recurring questions or complaints about conversion flows |
| Heatmaps/recordings | Where users hesitate, rage-click, or abandon |
| Past experiments | "Significant loser" tests often reveal new angles to try |

ICE Prioritization

Score each hypothesis 1-10 on three dimensions:

| Dimension | Question |
|---|---|
| Impact | If this works, how much will it move the primary metric? |
| Confidence | How sure are we this will work? (Based on data, not gut.) |
| Ease | How fast and cheap can we ship and measure this? |

ICE Score = (Impact + Confidence + Ease) / 3

Run highest-scoring experiments first. Re-score monthly as context changes.
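
The ICE formula above is simple enough to keep in a script or spreadsheet. As a minimal sketch, the following scores and sorts a backlog. The `Hypothesis` class, the `prioritize` helper, and the example entries are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Hypothesis:
    name: str
    impact: int      # 1-10
    confidence: int  # 1-10
    ease: int        # 1-10

    @property
    def ice(self):
        """ICE Score = (Impact + Confidence + Ease) / 3."""
        return (self.impact + self.confidence + self.ease) / 3


def prioritize(backlog):
    """Return hypotheses ordered from highest to lowest ICE score."""
    return sorted(backlog, key=lambda h: h.ice, reverse=True)


if __name__ == "__main__":
    # Hypothetical backlog entries for illustration only.
    backlog = [
        Hypothesis("Larger CTA button", impact=6, confidence=7, ease=9),
        Hypothesis("Rewrite pricing page", impact=9, confidence=5, ease=3),
        Hypothesis("Add social proof near CTA", impact=7, confidence=8, ease=8),
    ]
    for h in prioritize(backlog):
        print(f"{h.ice:.1f}  {h.name}")
```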

Experiment Velocity

Track your experimentation rate as a leading indicator of growth:

| Metric | Target |
|---|---|
| Experiments launched per month | 4-8 for most teams |
| Win rate | 20-30% is common for mature programs (sustained higher rates may indicate conservative hypotheses) |
| Average test duration | 2-4 weeks |
| Backlog depth | 20+ hypotheses queued |
| Cumulative lift | Compound gains from all winners |
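
Cumulative lift in the table above compounds rather than adds. The sketch below shows the arithmetic under the simplifying assumptions that every winner moved the same metric and that the gains are independent and multiplicative. The function name and example lifts are hypothetical.

```python
from math import prod


def cumulative_lift(winning_lifts):
    """Compound a list of relative lifts (0.05 = +5%) into one overall lift."""
    return prod(1 + lift for lift in winning_lifts) - 1


if __name__ == "__main__":
    # Hypothetical winners: +5%, +10%, and +8% on the same primary metric.
    print(f"{cumulative_lift([0.05, 0.10, 0.08]):.2%}")
```

Treat the result as an estimate rather than a measured outcome, since it relies on the assumptions stated above.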

The Experiment Playbook

When a test wins, don't just implement it — document the pattern:

## [Experiment Name]

**Date**: [date]

**Hypothesis**: [the hypothesis]

**Sample size**: [n per variant]

**Result**: [winner/loser/inconclusive] — [primary metric] changed by [X%] (95% CI: [range], p=[value])

**Guardrails**: [any guardrail metrics and their outcomes]

**Segment deltas**: [notable differences by device, segment, or cohort]

**Why it worked/failed**: [analysis]

**Pattern**: [the reusable insight — e.g., "social proof near pricing CTAs increases plan selection"]

**Apply to**: [other pages/flows where this pattern might work]

**Status**: [implemented / parked / needs follow-up test]

Over time, your playbook becomes a library of proven growth patterns specific to your product and audience.

Experiment Cadence

Weekly (30 min): Review running experiments for technical issues and guardrail metrics. Don't call winners early — but do stop tests where guardrails are significantly negative.

Bi-weekly: Conclude completed experiments. Analyze results, update playbook, launch next experiment from backlog.

Monthly (1 hour): Review experiment velocity, win rate, cumulative lift. Replenish hypothesis backlog. Re-prioritize with ICE.

Quarterly: Audit the playbook. Which patterns have been applied broadly? Which winning patterns haven't been scaled yet? What areas of the funnel are under-tested?

Common Mistakes

Test Design

- Testing too small a change (undetectable)

- Testing too many things (can't isolate)

- No clear hypothesis

Execution

- Stopping early

- Changing things mid-test

- Not checking implementation

Analysis

- Ignoring confidence intervals

- Cherry-picking segments

- Over-interpreting inconclusive results

Task-Specific Questions

- What's your current conversion rate?

- How much traffic does this page get?

- What change are you considering and why?

- What's the smallest improvement worth detecting?

- What tools do you have for testing?

- Have you tested this area before?

Related Skills

- page-cro: For generating test ideas based on CRO principles

- analytics-tracking: For setting up test measurement

- copywriting: For creating variant copy

Installs

Security Audit

Power your AI Agents with

the best open-source models.

Drop-in OpenAI-compatible API. No data leaves Europe.

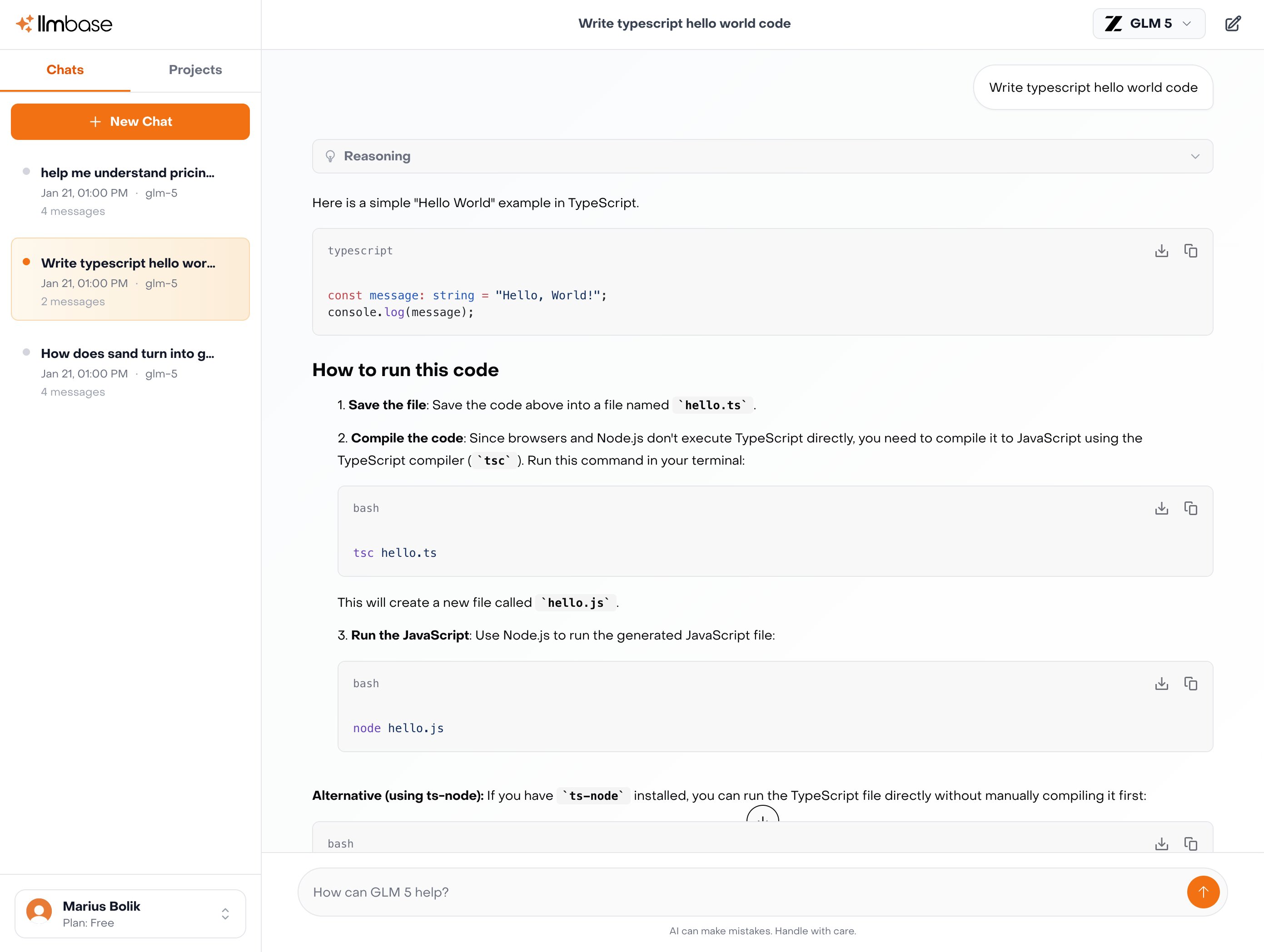

Explore Inference APIGLM

GLM 5

$1.00 / $3.20

per M tokens

Kimi

Kimi K2.5

$0.60 / $2.80

per M tokens

MiniMax

MiniMax M2.5

$0.30 / $1.20

per M tokens

Qwen

Qwen3.5 122B

$0.40 / $3.00

per M tokens

How to use this skill

Install ab-test-setup by running npx skills add coreyhaines31/marketingskills --skill ab-test-setup in your project directory. The skill file will be downloaded from GitHub and placed in your project.

No configuration needed. Your AI agent (Claude Code, Cursor, Windsurf, etc.) automatically detects installed skills and uses them as context when generating code.

The skill enhances your agent's understanding of ab-test-setup, helping it follow established patterns, avoid common mistakes, and produce production-ready output.

What you get

Skills are plain-text instruction files — not executable code. They encode expert knowledge about frameworks, languages, or tools that your AI agent reads to improve its output. This means zero runtime overhead, no dependency conflicts, and full transparency: you can read and review every instruction before installing.

Compatibility

This skill works with any AI coding agent that supports the skills.sh format, including Claude Code (Anthropic), Cursor, Windsurf, Cline, Aider, and other tools that read project-level context files. Skills are framework-agnostic at the transport level — the content inside determines which language or framework it applies to.
