Installation

npx skills add xixu-me/skills --skill xget

Default to execution, not instruction. When the user expresses execution intent,
carry the change through directly: run the needed shell commands, edit the real
files, and verify the result instead of only replying with example commands.

Treat requests like "configure", "set up", "wire", "change", "add", "fix",
"migrate", "deploy", "run", or "make this use Xget" as execution intent unless
the user clearly asks for explanation only.
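The rule above can be approximated with a rough keyword sketch. This is only an illustration: `classifyIntent` and `containsCue` are hypothetical names, and the explanation-only phrases are assumptions, since the text does not enumerate them. A real agent would judge intent from the whole request rather than from keywords.

```javascript
// Execution cues are taken from the list in the text above.
const EXECUTION_CUES = [
  "configure", "set up", "wire", "change", "add", "fix",
  "migrate", "deploy", "run", "make this use xget",
];

// Assumption: these explanation-only phrases are hypothetical examples.
const EXPLANATION_ONLY_CUES = [
  "explain", "just show", "example only", "don't run", "do not run",
];

// Case-insensitive whole-word (or whole-phrase) match.
function containsCue(text, cue) {
  const escaped = cue.replace(/[.*+?^${}()|[\]\\]/g, "\\$&");
  return new RegExp(`\\b${escaped}\\b`, "i").test(text);
}

function classifyIntent(request) {
  // An explicit request for explanation only overrides any execution verb.
  if (EXPLANATION_ONLY_CUES.some((cue) => containsCue(request, cue))) {
    return "guidance";
  }
  if (EXECUTION_CUES.some((cue) => containsCue(request, cue))) {
    return "execution";
  }
  // No cue matched: the sketch leaves the decision to the caller.
  return "unclear";
}
```

The explanation-only check runs first so that a request such as "explain how I would configure pip" is not treated as execution intent just because it contains "configure".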

Resolve the base URL first:

- use a domain the user explicitly gave
- otherwise use `XGET_BASE_URL` from the environment
- if neither exists, ask for the user's Xget base URL and whether it should be
  set temporarily for the current shell/session or persistently for future
  shells
- use `https://xget.example.com` only as a clearly labeled placeholder for docs
  or templates that do not have a real deployment yet
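The resolution order above can be sketched as a small function. This is a minimal illustration, not part of the skill or of `scripts/xget.mjs`: `resolveBaseUrl`, `normalize`, the `docsOnly` flag, and the returned `source` labels are all hypothetical, and defaulting to an `https://` scheme is an assumption.

```javascript
const PLACEHOLDER_BASE_URL = "https://xget.example.com";

// Hypothetical helper: trims whitespace, adds a scheme if missing (assumed
// https), and drops trailing slashes so paths can be appended consistently.
function normalize(value) {
  const trimmed = value.trim();
  const withScheme = /^https?:\/\//i.test(trimmed) ? trimmed : `https://${trimmed}`;
  return withScheme.replace(/\/+$/, "");
}

function resolveBaseUrl({ userDomain, env = {}, docsOnly = false } = {}) {
  // A domain the user explicitly gave always wins.
  if (userDomain) {
    return { baseUrl: normalize(userDomain), source: "user" };
  }
  // Otherwise use XGET_BASE_URL from the environment.
  if (env.XGET_BASE_URL) {
    return { baseUrl: normalize(env.XGET_BASE_URL), source: "env" };
  }
  // Docs or templates without a real deployment may use the placeholder,
  // which the caller must label clearly as a placeholder.
  if (docsOnly) {
    return { baseUrl: PLACEHOLDER_BASE_URL, source: "placeholder" };
  }
  // Nothing is known: the caller has to ask the user for the base URL and
  // whether to set it temporarily or persistently.
  return { baseUrl: null, source: "ask-user" };
}
```

In a Node script the environment would typically be passed in as `resolveBaseUrl({ env: process.env })`; keeping it a parameter makes the order easy to check in isolation.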

Prefer `scripts/xget.mjs` over manual guessing for live
platform data, URL conversion, and README Use Cases lookup.

Only stop to ask when a missing fact blocks safe execution, such as an unknown
real base URL for a command that must run against a live deployment. If the user
only needs docs or templates, use the placeholder path rules below.

Workflow

- Classify the task before reaching for examples:
  - execution intent: the user wants commands run, files changed, or config
    applied now
  - guidance intent: the user explicitly wants examples, explanation, or a
    template without applying it yet
  - then bucket the technical area: one-off URL conversion or prefix lookup;
    Git or download-tool acceleration; package-manager or language-ecosystem
    configuration; container image, Dockerfile, Kubernetes, or CI/CD
    acceleration; AI SDK / inference API base-URL configuration; deploying or
    self-hosting Xget itself

- Complete the base-URL preflight above. If the user wants help setting
  `XGET_BASE_URL`, open the reference guide and:
  - when the user asked you to set or wire it, run the shell-appropriate
    temporary or persistent commands directly when the environment allows it
  - when you cannot safely execute, ask the smallest blocking question or give
    the exact command with the missing value clearly called out

- Pull live README guidance in two steps instead of loading the whole section
  by default:
  - list candidate headings with `node scripts/xget.mjs topics --format json`
  - narrow with `--match` or fetch a specific section with
    `node scripts/xget.mjs snippet --base-url https://xget.example.com --heading "Docker Compose Configuration" --format text`

- Prefer the smallest relevant live subsection. If a repeated child heading
  like `Use in Project` is ambiguous, fetch its parent section instead.

- Adapt the live guidance to the user's real task:
  - for execution intent, apply the change end-to-end instead of stopping at
    example commands
  - run commands yourself when the request is to install, configure, rewrite,
    switch, migrate, test, or otherwise perform the change
  - edit the actual config or source files when the user wants implementation,
    not just explanation
  - keep shell commands aligned with the user's OS and shell
  - preserve existing project conventions unless the user asked for a broader
    rewrite
  - after changing files or running commands, perform a lightweight
    verification step when practical

- Refresh the live platform map with
  `node scripts/xget.mjs platforms --format json` when the answer depends on
  current prefixes, and use `convert` for exact URL rewrites.

- Combine multiple live sections when the workflow spans multiple layers. For
  example, pair a package-manager section with container, deployment, or `.env`
  guidance when the user's project needs more than one integration point.

- Before finishing, sanity-check that every command, file edit, or example uses
  the right Xget path shape:
  - repo/content: `/{prefix}/...`
  - crates.io HTTP URLs: `/crates/...` rather than `/crates/api/v1/crates/...`
  - inference APIs: `/ip/{provider}/...`
  - OCI registries: `/cr/{registry}/...`

- If the live platform fetch fails or an upstream URL does not match any known
  platform, say so explicitly and fall back to the stable guidance in
  `references/REFERENCE.md` instead of inventing a prefix.
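The path-shape sanity check from the workflow can be sketched as a small classifier. This is an illustration under stated assumptions: `checkXgetPath` is a hypothetical helper, not part of `scripts/xget.mjs`, and it only inspects the shape of the path. It cannot tell whether a prefix is a real platform; that still requires the live platform map.

```javascript
// Hypothetical helper: classifies an Xget URL by path shape and flags the
// shape mistakes named in the workflow. It does not validate prefixes.
function checkXgetPath(url) {
  const { pathname } = new URL(url);
  const segments = pathname.split("/").filter(Boolean);
  const [prefix] = segments;

  if (!prefix) {
    return { ok: false, shape: null, reason: "no platform prefix in path" };
  }

  if (prefix === "crates") {
    // crates.io HTTP URLs should not repeat the upstream API segment.
    const doubled = pathname.startsWith("/crates/api/v1/crates/");
    return doubled
      ? { ok: false, shape: "crates", reason: "use /crates/... rather than /crates/api/v1/crates/..." }
      : { ok: true, shape: "crates", reason: null };
  }

  if (prefix === "ip" || prefix === "cr") {
    // Inference APIs need /ip/{provider}/..., OCI registries /cr/{registry}/...
    const shape = prefix === "ip" ? "inference" : "oci";
    const hasName = segments.length >= 2;
    return { ok: hasName, shape, reason: hasName ? null : `expected /${prefix}/{name}/...` };
  }

  // Everything else is treated as the generic repo/content shape /{prefix}/...
  return { ok: true, shape: "repo/content", reason: null };
}
```

For example, `checkXgetPath("https://xget.example.com/crates/api/v1/crates/serde/1.0.0/download")` reports the doubled crates segment, while the same URL with `/crates/serde/1.0.0/download` passes. The crate path here is only an illustrative upstream path.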

Installs

Security Audit

View Source

xixu-me/skills

More from this source

Power your AI Agents with

the best open-source models.

Drop-in OpenAI-compatible API. No data leaves Europe.

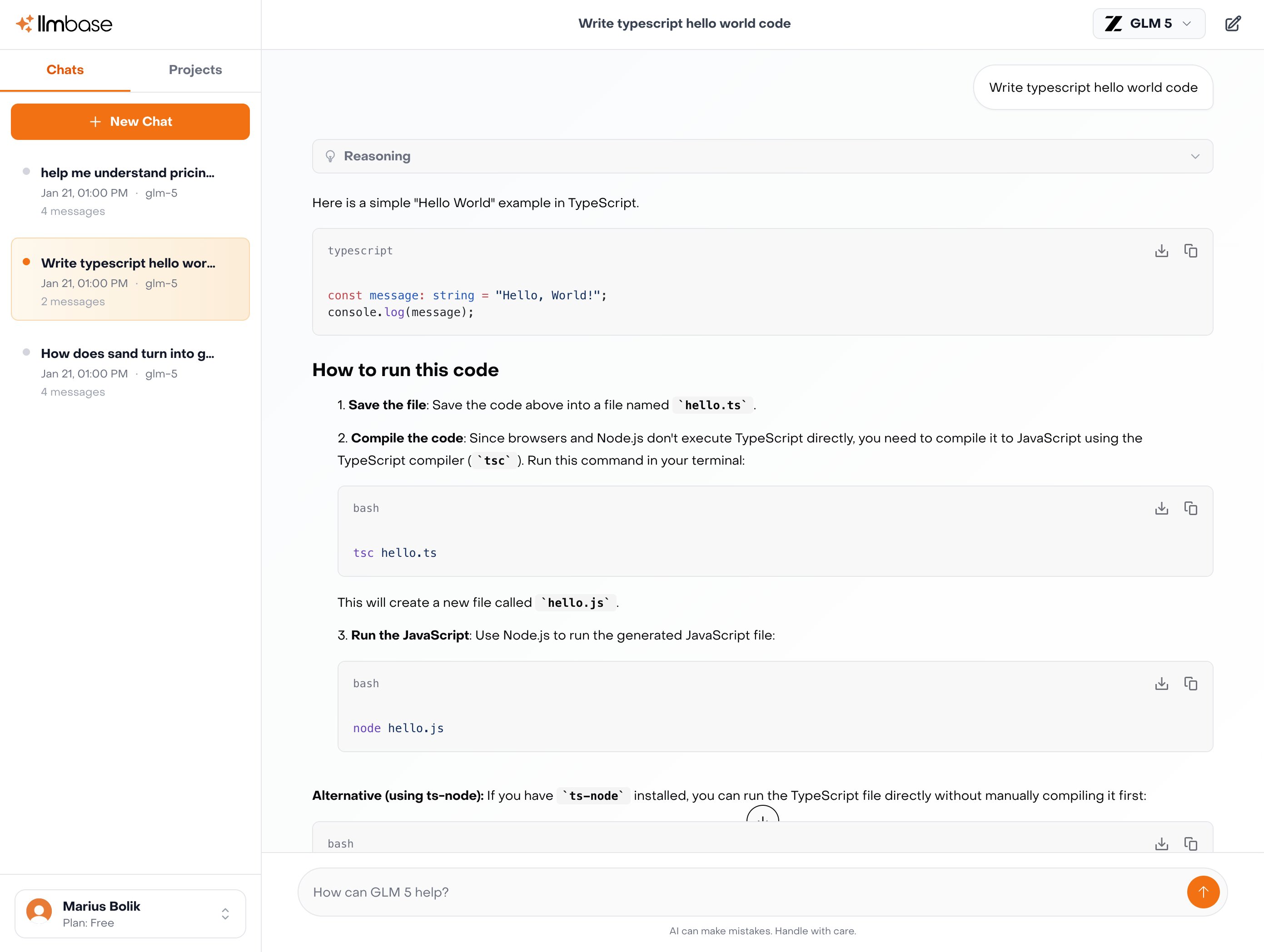

Explore Inference APIGLM

GLM 5

$1.00 / $3.20

per M tokens

Kimi

Kimi K2.5

$0.60 / $2.80

per M tokens

MiniMax

MiniMax M2.5

$0.30 / $1.20

per M tokens

Qwen

Qwen3.5 122B

$0.40 / $3.00

per M tokens

How to use this skill

Install xget by running `npx skills add xixu-me/skills --skill xget` in your project directory. The skill file is downloaded from GitHub and placed in your project.

No configuration needed. Your AI agent (Claude Code, Cursor, Windsurf, etc.) automatically detects installed skills and uses them as context when generating code.

The skill enhances your agent's understanding of xget, helping it follow established patterns, avoid common mistakes, and produce production-ready output.

What you get

Skills are plain-text instruction files — not executable code. They encode expert knowledge about frameworks, languages, or tools that your AI agent reads to improve its output. This means zero runtime overhead, no dependency conflicts, and full transparency: you can read and review every instruction before installing.

Compatibility

This skill works with any AI coding agent that supports the skills.sh format, including Claude Code (Anthropic), Cursor, Windsurf, Cline, Aider, and other tools that read project-level context files. Skills are framework-agnostic at the transport level — the content inside determines which language or framework it applies to.
