LLMs by Category

Top AI Models by Category

Compare current models across open source, proprietary, uncensored, coding, math, speed, and new releases.

Most-Used AI Models

Popular models on OpenRouter.

MiMo-V2-Pro

Xiaomi

MiMo-V2-Pro is Xiaomi's flagship foundation model, featuring over 1T total parameters and a 1M context length, deeply optimized for agentic scenarios.

MiniMax M2.5

MiniMax

MiniMax-M2.5 is a SOTA large language model designed for real-world productivity.

MiniMax M2.7

MiniMax

MiniMax-M2.7 is a next-generation large language model designed for autonomous, real-world productivity and continuous improvement.

GPT-5.4

OpenAI

GPT-5.4 is OpenAI’s latest frontier model, unifying the Codex and GPT lines into a single system.

Gemini 3.1 Pro Preview

Gemini 3.1 Pro Preview is Google’s frontier reasoning model, delivering enhanced software engineering performance, improved agentic reliability, and more efficient token usage across complex workflows.

GLM 5.1

Z.ai

GLM-5.1 delivers a major leap in coding capability, with particularly significant gains in handling long-horizon tasks.

Kimi K2.5

MoonshotAI

Kimi K2.5 is Moonshot AI's native multimodal model, delivering state-of-the-art visual coding capability and a self-directed agent swarm paradigm.

Step 3.5 Flash

StepFun

Step 3.5 Flash is StepFun's most capable open-source foundation model.

Gemini 3.1 Flash Lite Preview

Gemini 3.1 Flash Lite Preview is Google's high-efficiency model optimized for high-volume use cases.

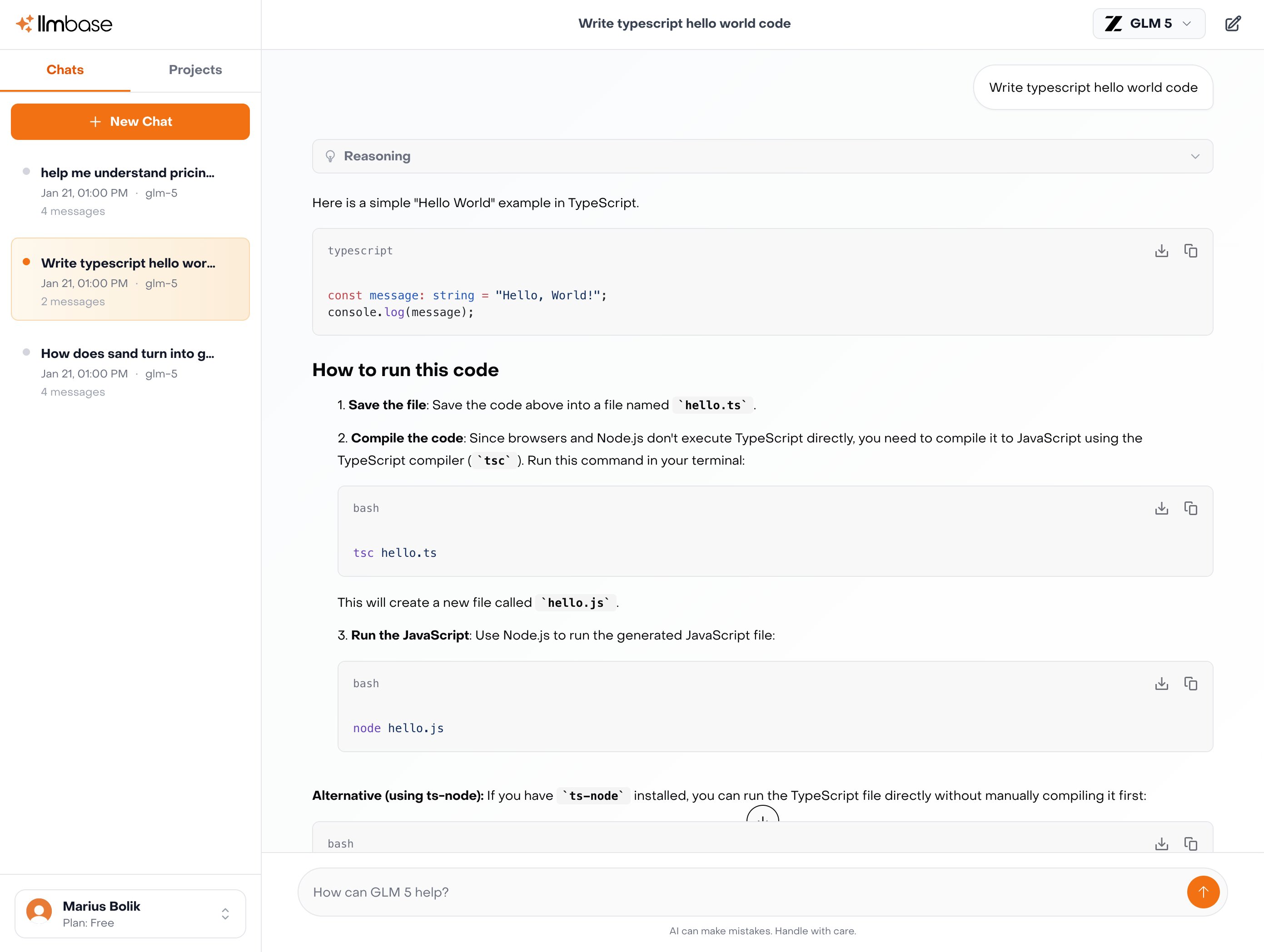

GLM 5 Turbo

Z.ai

GLM-5 Turbo is a new model from Z.ai designed for fast inference and strong performance in agent-driven environments such as OpenClaw scenarios.

Top Open-Source AI Models

Community-driven and transparent.

GLM 5.1

Z.ai

GLM-5.1 delivers a major leap in coding capability, with particularly significant gains in handling long-horizon tasks.

Qwen3.5 Plus

Qwen

The Qwen3.5 native vision-language series Plus models are built on a hybrid architecture that integrates linear attention mechanisms with sparse mixture-of-experts models, achieving higher inference efficiency.

MiniMax M2.7

MiniMax

MiniMax-M2.7 is a next-generation large language model designed for autonomous, real-world productivity and continuous improvement.

MiMo-V2-Pro

Xiaomi

MiMo-V2-Pro is Xiaomi's flagship foundation model, featuring over 1T total parameters and a 1M context length, deeply optimized for agentic scenarios.

Kimi K2.5

MoonshotAI

Kimi K2.5 is Moonshot AI's native multimodal model, delivering state-of-the-art visual coding capability and a self-directed agent swarm paradigm.

GLM 5 Turbo

Z.ai

GLM-5 Turbo is a new model from Z.ai designed for fast inference and strong performance in agent-driven environments such as OpenClaw scenarios.

Qwen3.5 397B A17B

Qwen

The Qwen3.5 series 397B-A17B native vision-language model is built on a hybrid architecture that integrates a linear attention mechanism with a sparse mixture-of-experts model, achieving higher inference efficiency.

MiMo-V2-Omni

Xiaomi

MiMo-V2-Omni is a frontier omni-modal model that natively processes image, video, and audio inputs within a unified architecture.

Qwen3.5-35B-A3B

Qwen

The Qwen3.5 Series 35B-A3B is a native vision-language model designed with a hybrid architecture that integrates linear attention mechanisms and a sparse mixture-of-experts model, achieving higher inference efficiency.

GLM 5V Turbo

Z.ai

GLM-5V-Turbo is Z.ai’s first native multimodal agent foundation model, built for vision-based coding and agent-driven tasks.

Top Proprietary AI Models

Leading closed-source models.

Claude Opus 4.6 (Fast)

Anthropic

Fast-mode variant of [Opus 4.6](/anthropic/claude-opus-4.6) - identical capabilities with higher output speed at premium 6x pricing.

Gemini 3.1 Pro Preview

Gemini 3.1 Pro Preview is Google’s frontier reasoning model, delivering enhanced software engineering performance, improved agentic reliability, and more efficient token usage across complex workflows.

GPT-5.4

OpenAI

GPT-5.4 is OpenAI’s latest frontier model, unifying the Codex and GPT lines into a single system.

GPT-5.3-Codex

OpenAI

GPT-5.3-Codex is OpenAI’s most advanced agentic coding model, combining the frontier software engineering performance of GPT-5.2-Codex with the broader reasoning and professional knowledge capabilities of GPT-5.2.

Grok 4.20

xAI

Grok 4.20 is xAI's newest flagship model with industry-leading speed and agentic tool calling capabilities.

GPT-5.4 Mini

OpenAI

GPT-5.4 mini brings the core capabilities of GPT-5.4 to a faster, more efficient model optimized for high-throughput workloads.

GPT-5.1-Codex-Max

OpenAI

GPT-5.1-Codex-Max is OpenAI’s latest agentic coding model, designed for long-running, high-context software development tasks.

Gemini 3.1 Flash Lite Preview

Gemini 3.1 Flash Lite Preview is Google's high-efficiency model optimized for high-volume use cases.

GPT-5.1-Codex-Mini

OpenAI

GPT-5.1-Codex-Mini is a smaller and faster version of GPT-5.1-Codex.

Gemma 4 26B A4B

Gemma 4 26B A4B IT is an instruction-tuned Mixture-of-Experts (MoE) model from Google DeepMind.

Top Coding AI Models

Optimized for code and developer workflows.

GPT-5.4

OpenAI

GPT-5.4 is OpenAI’s latest frontier model, unifying the Codex and GPT lines into a single system.

Gemini 3.1 Pro Preview

Gemini 3.1 Pro Preview is Google’s frontier reasoning model, delivering enhanced software engineering performance, improved agentic reliability, and more efficient token usage across complex workflows.

GPT-5.3-Codex

OpenAI

GPT-5.3-Codex is OpenAI’s most advanced agentic coding model, combining the frontier software engineering performance of GPT-5.2-Codex with the broader reasoning and professional knowledge capabilities of GPT-5.2.

Claude Opus 4.6 (Fast)

Anthropic

Fast-mode variant of [Opus 4.6](/anthropic/claude-opus-4.6) - identical capabilities with higher output speed at premium 6x pricing.

GPT-5.4 Mini

OpenAI

GPT-5.4 mini brings the core capabilities of GPT-5.4 to a faster, more efficient model optimized for high-throughput workloads.

GPT-5.1-Codex-Max

OpenAI

GPT-5.1-Codex-Max is OpenAI’s latest agentic coding model, designed for long-running, high-context software development tasks.

GLM 5.1

Z.ai

GLM-5.1 delivers a major leap in coding capability, with particularly significant gains in handling long-horizon tasks.

Qwen3.5 Plus

Qwen

The Qwen3.5 native vision-language series Plus models are built on a hybrid architecture that integrates linear attention mechanisms with sparse mixture-of-experts models, achieving higher inference efficiency.

Gemini 3.1 Flash Lite Preview

Gemini 3.1 Flash Lite Preview is Google's high-efficiency model optimized for high-volume use cases.

Grok 4.20

xAI

Grok 4.20 is xAI's newest flagship model with industry-leading speed and agentic tool calling capabilities.

Top OCR AI Models

Specialized in text recognition and document extraction.

PaddleOCR-VL-0.9B

PaddlePaddle

Baidu's 0.9B vision-language OCR model combining a NaViT-style dynamic-resolution encoder with ERNIE-4.5-0.3B. Handles multilingual text, tables, charts, and formulas across 16K context — optimized for efficient on-device document parsing.

olmOCR-2-7B

AllenAI

Allen AI's 7B OCR model fine-tuned from Qwen2.5-VL-7B on curated academic papers and technical documentation. Supports 128K context and extracts structured text from PDFs and scanned documents with high fidelity.

DeepSeek-OCR

DeepSeek

DeepSeek's ~3B MoE OCR model using optical context compression to encode full pages into compact token sequences. Outputs structured Markdown preserving text layout, tables, and mathematical formulas from images and PDFs.

Mistral OCR

Mistral AI

Mistral's dedicated document understanding model (December 2025). Processes PDFs and images page-by-page via API, returning structured Markdown with preserved tables, equations, image bounding boxes, and rich layout metadata.

Top Math AI Models

Specialists for mathematics and reasoning.

GPT-5.4

OpenAI

GPT-5.4 is OpenAI’s latest frontier model, unifying the Codex and GPT lines into a single system.

GPT-5.3-Codex

OpenAI

GPT-5.3-Codex is OpenAI’s most advanced agentic coding model, combining the frontier software engineering performance of GPT-5.2-Codex with the broader reasoning and professional knowledge capabilities of GPT-5.2.

Gemini 3.1 Flash Lite Preview

Gemini 3.1 Flash Lite Preview is Google's high-efficiency model optimized for high-volume use cases.

DeepSeek V3.2 Speciale

DeepSeek

DeepSeek-V3.2-Speciale is a high-compute variant of DeepSeek-V3.2 optimized for maximum reasoning and agentic performance.

MiMo-V2-Flash

Xiaomi

MiMo-V2-Flash is an open-source foundation language model developed by Xiaomi.

GPT-5.1-Codex-Mini

OpenAI

GPT-5.1-Codex-Mini is a smaller and faster version of GPT-5.1-Codex.

Gemini 3.1 Pro Preview

Gemini 3.1 Pro Preview is Google’s frontier reasoning model, delivering enhanced software engineering performance, improved agentic reliability, and more efficient token usage across complex workflows.

GLM 4 32B

Z.ai

GLM 4 32B is a cost-effective foundation language model.

Kimi K2 Thinking

MoonshotAI

Kimi K2 Thinking is Moonshot AI’s most advanced open reasoning model to date, extending the K2 series into agentic, long-horizon reasoning.

GPT-5.1-Codex-Max

OpenAI

GPT-5.1-Codex-Max is OpenAI’s latest agentic coding model, designed for long-running, high-context software development tasks.

Fast AI Models

Low cost with low latency.

Mercury 2

Inception

Mercury 2 is an extremely fast reasoning LLM, and the first reasoning diffusion LLM (dLLM).

Gemini 3.1 Flash Lite Preview

Gemini 3.1 Flash Lite Preview is Google's high-efficiency model optimized for high-volume use cases.

gpt-oss-safeguard-20b

OpenAI

gpt-oss-safeguard-20b is a safety reasoning model from OpenAI built upon gpt-oss-20b.

Ministral 3 3B 2512

Mistral

The smallest model in the Ministral 3 family, Ministral 3 3B is a powerful, efficient tiny language model with vision capabilities.

Qwen3.5-Flash

Qwen

The Qwen3.5 native vision-language Flash models are built on a hybrid architecture that integrates a linear attention mechanism with a sparse mixture-of-experts model, achieving higher inference efficiency.

Qwen3.5-27B

Qwen

The Qwen3.5 27B native vision-language Dense model incorporates a linear attention mechanism, delivering fast response times while balancing inference speed and performance.

Qwen3.5-35B-A3B

Qwen

The Qwen3.5 Series 35B-A3B is a native vision-language model designed with a hybrid architecture that integrates linear attention mechanisms and a sparse mixture-of-experts model, achieving higher inference efficiency.

Grok 4.20

xAI

Grok 4.20 is xAI's newest flagship model with industry-leading speed and agentic tool calling capabilities.

Mistral Small 4

Mistral

Mistral Small 4 is the next major release in the Mistral Small family, unifying the capabilities of several flagship Mistral models into a single system.

GPT-5.1-Codex-Mini

OpenAI

GPT-5.1-Codex-Mini is a smaller and faster version of GPT-5.1-Codex.

Top Image Generation AI Models

Models that generate images from text prompts.

Nano Banana 2 (Gemini 3.1 Flash Image Preview)

Gemini 3.1 Flash Image Preview, a.k.a. Nano Banana 2.

Seedream 4.5

ByteDance Seed

Seedream 4.5 is the latest in-house image generation model developed by ByteDance.

Riverflow V2 Fast

Sourceful

Riverflow V2 Fast is the fastest variant of Sourceful's Riverflow 2.0 lineup, best for production deployments and latency-critical workflows.

Riverflow V2 Pro

Sourceful

Riverflow V2 Pro is the most powerful variant of Sourceful's Riverflow 2.0 lineup, best for top-tier control and perfect text rendering.

Riverflow V2 Standard Preview

Sourceful

Riverflow V2 Standard Preview is the standard variant of Sourceful's Riverflow V2 preview lineup.

FLUX.2 Pro

Black Forest Labs

A high-end image generation and editing model focused on frontier-level visual quality and reliability.

FLUX.2 Max

Black Forest Labs

FLUX.2 [max] is the new top-tier image model from Black Forest Labs, pushing image quality, prompt understanding, and editing consistency to the highest level yet.

Riverflow V2 Max Preview

Sourceful

Riverflow V2 Max Preview is the most powerful variant of Sourceful's Riverflow V2 preview lineup.

FLUX.2 Klein 4B

Black Forest Labs

FLUX.2 [klein] 4B is the fastest and most cost-effective model in the FLUX.2 family, optimized for high-throughput use cases while maintaining excellent image quality.

FLUX.2 Flex

Black Forest Labs

FLUX.2 [flex] excels at rendering complex text, typography, and fine details, and supports multi-reference editing in the same unified architecture.

Top Audio AI Models

Models with speech and audio output.

GPT Audio Mini

OpenAI

A cost-efficient version of GPT Audio.

GPT Audio

OpenAI

The gpt-audio model is OpenAI's first generally available audio model.

AI Models with Large Context Windows

Models with 200K+ context windows.

Grok 4.20

xAI

Grok 4.20 is xAI's newest flagship model with industry-leading speed and agentic tool calling capabilities.

Grok 4.20 Multi-Agent

xAI

Grok 4.20 Multi-Agent is a variant of xAI’s Grok 4.20 designed for collaborative, agent-based workflows.

GPT-5.4

OpenAI

GPT-5.4 is OpenAI’s latest frontier model, unifying the Codex and GPT lines into a single system.

GPT-5.4 Pro

OpenAI

GPT-5.4 Pro is OpenAI's most advanced model, building on GPT-5.4's unified architecture with enhanced reasoning capabilities for complex, high-stakes tasks.

MiMo-V2-Pro

Xiaomi

MiMo-V2-Pro is Xiaomi's flagship foundation model, featuring over 1T total parameters and a 1M context length, deeply optimized for agentic scenarios.

Gemini 3.1 Pro Preview

Gemini 3.1 Pro Preview is Google’s frontier reasoning model, delivering enhanced software engineering performance, improved agentic reliability, and more efficient token usage across complex workflows.

Gemini 3.1 Flash Lite Preview

Gemini 3.1 Flash Lite Preview is Google's high-efficiency model optimized for high-volume use cases.

Gemini 3.1 Pro Preview Custom Tools

Gemini 3.1 Pro Preview Custom Tools is a variant of Gemini 3.1 Pro that improves tool selection behavior by preventing overuse of a general bash tool when more efficient third-party or user-defined functions are available.

Palmyra X5

Writer

Palmyra X5 is Writer's most advanced model, purpose-built for building and scaling AI agents across the enterprise.

Qwen3.5-Flash

Qwen

The Qwen3.5 native vision-language Flash models are built on a hybrid architecture that integrates a linear attention mechanism with a sparse mixture-of-experts model, achieving higher inference efficiency.

Top Uncensored AI Models

Lightly filtered models with high flexibility.

Newest AI Models

Fresh releases on OpenRouter.

Claude Opus 4.6 (Fast)

Anthropic

Fast-mode variant of [Opus 4.6](/anthropic/claude-opus-4.6) - identical capabilities with higher output speed at premium 6x pricing.

GLM 5.1

Z.ai

GLM-5.1 delivers a major leap in coding capability, with particularly significant gains in handling long-horizon tasks.

Gemma 4 26B A4B

Gemma 4 26B A4B IT is an instruction-tuned Mixture-of-Experts (MoE) model from Google DeepMind.

GLM 5V Turbo

Z.ai

GLM-5V-Turbo is Z.ai’s first native multimodal agent foundation model, built for vision-based coding and agent-driven tasks.

Trinity Large Thinking

Arcee AI

Trinity Large Thinking is a powerful open source reasoning model from the team at Arcee AI.

Grok 4.20 Multi-Agent

xAI

Grok 4.20 Multi-Agent is a variant of xAI’s Grok 4.20 designed for collaborative, agent-based workflows.

Grok 4.20

xAI

Grok 4.20 is xAI's newest flagship model with industry-leading speed and agentic tool calling capabilities.

KAT-Coder-Pro V2

Kwaipilot

KAT-Coder-Pro V2 is the latest high-performance model in KwaiKAT’s KAT-Coder series, designed for complex enterprise-grade software engineering and SaaS integration.

Reka Edge

rekaai

Reka Edge is an extremely efficient 7B multimodal vision-language model that accepts image/video+text inputs and generates text outputs.

MiMo-V2-Omni

Xiaomi

MiMo-V2-Omni is a frontier omni-modal model that natively processes image, video, and audio inputs within a unified architecture.

How to Find the Right AI Model

A practical guide to choosing a model by use case.

Match the Model to the Task

General-purpose models such as GPT-4o and Claude Sonnet work well for many tasks. For specialized cases, coding models (DeepSeek Coder, Codestral) and math models (QwQ, DeepSeek R1) are often more precise and cheaper per token.

Consider the Context Window

For long documents, codebases, or long conversations, context size is decisive. Models range from 8K to more than 1M tokens. Larger windows allow more input but often increase cost and latency.
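A quick pre-check can show whether an input is likely to fit a given context window before sending it. The sketch below is only a rough illustration: the ratio of about 4 characters per token is a common rule of thumb rather than a property of any specific model, and the function names and the reserved output budget are hypothetical. For exact counts, use the tokenizer of the model in question.

```python
import math


def estimate_tokens(text: str, chars_per_token: float = 4.0) -> int:
    """Rough token estimate from character count (heuristic, not a tokenizer)."""
    if chars_per_token <= 0:
        raise ValueError("chars_per_token must be positive")
    return math.ceil(len(text) / chars_per_token)


def fits_context(
    text: str,
    context_window: int,
    reserved_output: int = 1024,  # assumed budget for the model's reply
    chars_per_token: float = 4.0,  # assumed average; varies by model and language
) -> bool:
    """Return True if the estimated input plus reserved output fits the window."""
    needed = estimate_tokens(text, chars_per_token) + reserved_output
    return needed <= context_window


if __name__ == "__main__":
    document = "word " * 10_000  # 50,000 characters of sample input
    for window in (8_000, 200_000, 1_000_000):
        print(f"{window:>9} tokens: fits={fits_context(document, window)}")
```

Reserving part of the window for the reply matters because input and output tokens typically share the same limit.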

Balance Cost, Speed, and Quality

Frontier models deliver top benchmark scores but are more expensive and often slower. Fast models such as Gemini Flash, Llama 3 (8B), and Mistral Small are often much cheaper under high load and respond faster.
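To make the cost trade-off concrete, it can help to estimate the spend per request from a model's per-token prices. The sketch below assumes prices are quoted per million input and output tokens, which is a common convention; the two price points and the labels "frontier" and "fast" are made-up placeholders, not real prices for any model listed here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    """Price in USD per one million tokens (hypothetical values only)."""
    input_per_million: float
    output_per_million: float


def request_cost(pricing: Pricing, input_tokens: int, output_tokens: int) -> float:
    """Estimated cost in USD of a single request."""
    total = (
        input_tokens * pricing.input_per_million
        + output_tokens * pricing.output_per_million
    )
    return total / 1_000_000


if __name__ == "__main__":
    # Placeholder price points for illustration, not taken from any provider.
    models = {
        "frontier": Pricing(input_per_million=2.0, output_per_million=8.0),
        "fast": Pricing(input_per_million=0.1, output_per_million=0.4),
    }
    for name, pricing in models.items():
        per_request = request_cost(pricing, input_tokens=3_000, output_tokens=500)
        print(f"{name}: ${per_request:.6f} per request, "
              f"${per_request * 100_000:.2f} per 100k requests")
```

Multiplying the per-request figure by the expected request volume shows quickly whether a cheaper model is worth evaluating for a high-load workload.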

Open Source vs. Proprietary

Open-source models (Llama, Mistral, Qwen, DeepSeek) offer self-hosting and customizability. Proprietary models (GPT-4o, Claude, Gemini) often lead on benchmarks. Many teams combine both approaches.

Check Multimodality

Some models process images, audio, or files in addition to text. For workflows involving screenshots, diagrams, or audio transcripts, vision and audio inputs matter. Structured outputs and function calling are central for agents.

Use Benchmarks as a Starting Point

GPQA, MMLU Pro, and HLE measure knowledge and reasoning. LiveCodeBench and SciCode test practical coding. MATH 500 and AIME assess mathematical problem solving. Compare the relevant categories and additionally test with your own prompts.
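Testing with your own prompts can start as a very small harness. The sketch below is one possible shape, not a standard tool: `ask` is a hypothetical callable that wraps whatever model client you use, the test cases are invented, and the scoring is a deliberately simple case-insensitive substring match that will miss correct answers phrased differently.

```python
from typing import Callable, Sequence, Tuple


def accuracy(
    ask: Callable[[str], str],
    cases: Sequence[Tuple[str, str]],
) -> float:
    """Fraction of cases whose expected answer appears in the model's reply."""
    if not cases:
        return 0.0
    hits = 0
    for prompt, expected in cases:
        reply = ask(prompt)
        if expected.strip().lower() in reply.strip().lower():
            hits += 1
    return hits / len(cases)


if __name__ == "__main__":
    # Stand-in for a real model call, so the sketch runs offline.
    def fake_model(prompt: str) -> str:
        canned = {"What is 2 + 2?": "The answer is 4."}
        return canned.get(prompt, "I am not sure.")

    cases = [
        ("What is 2 + 2?", "4"),
        ("What is the capital of France?", "Paris"),
    ]
    print(f"accuracy: {accuracy(fake_model, cases):.2f}")
```

Running the same cases against several candidate models gives a comparison grounded in your own workload rather than in public benchmark scores alone.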

Data on this page comes from OpenRouter and Artificial Analysis. Prices, speed, and benchmark scores are updated regularly. You can test any model right away in the free chat.